There’s so much to take in at SIGGRAPH these days, but a couple of events are becoming absolute ‘must-sees’. One of those is Real-Time Live!, an evening of on-stage presentations of real-time tech. It’s live, so anything can happen.

If you’re not already aware of how Real-Time Live! works, vfxblog spoke to chair Jesse Barker about how it all happens. Read on below the interview to see which presentations will make up the event on Tuesday, 14 August, 6-7:45 pm, at West Building, Ballroom AB at the Vancouver Convention Centre.

vfxblog: For someone who hasn’t seen Real-Time Live! before, can you explain what happens on the night?

Jesse Barker: Real-Time Live! is a live show featuring demonstrations and deconstructions of bleeding-edge, real-time graphics techniques from researchers and practitioners from around the world. Metaphorically, attendees get to see how the “sausage is made.” Because it’s live, sometimes things go a bit sideways, so what’s exciting is seeing how the presenters recover. The audience is always rooting for a successful presentation, which definitely benefits the presenters.

vfxblog: How are the Real-Time Live! sessions chosen? How is the best presentation judged?

Jesse Barker: Real-Time Live! is a juried program. After a period of extensive outreach, the submissions we get are reviewed by a jury of accomplished researchers and practitioners. Those reviews are then used to determine which submissions are accepted into the show. This year, in the spirit of the live nature of the show — and it. is. a show. — we are working on enabling the audience to participate in voting via the conference app on their mobile devices. It should be an exciting finish!

vfxblog: I think people might understand what real-time means in terms of gaming, but how have you seen it permeate other areas such as filmmaking and immersive entertainment in recent times?

Jesse Barker: We’ve seen other areas evolve for quite a while now. There have always been real-time tools in filmmaking, but now we’re seeing people make beautifully rendered real-time films, two of which are being presented in this year’s Computer Animation Festival Electronic Theater (“ADAM: Episode 2” and “Book of the Dead”). And we’re not just talking about entertainment. At SIGGRAPH, we’ve slowly been witnessing a variety of immersive simulators for things like surgical procedures, driving, and flying that allow a great deal of realistic virtual practice. The whole spectrum of mixed reality is getting better all the time.

vfxblog: Since real-time has moved beyond gaming, what collaborations do you see have been happening or could further happen between real-time developers, creative content makers and other developers?

Jesse Barker: In addition to existing use cases getting better, as real-time graphics improve, I think there are a number of additional areas that will benefit. Design and simulation are areas that come to mind. Being able to iterate interactively on, for example, a car body design more rapidly — to see photorealistic results based on adjustments to the individual layers of paint and clear coat — is a very powerful tool. When you combine that kind of rendering power with something like a game engine, you have the ability to simulate real-world situations without putting people in danger.

vfxblog: Are you nervous during Real-Time Live!, since the presenters must present live on stage? How do you and the presenters deal with this?

Jesse Barker: I don’t know if I’m nervous (a bit, maybe 🙂), but I think the key is preparation and having the support of the awesome show crew that handles stage management, audio/visual, and networking services that are critical to a successful live show like Real-Time Live!

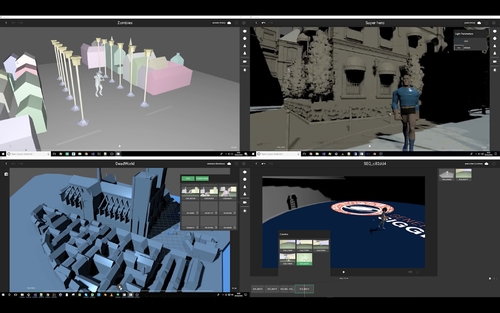

Real-Time Live! at SIGGRAPH 2018

IKEA Immerse Interior Designer

IKEA Immerse is available in select IKEA stores in Germany. This application enables consumers to create, experience, and share their own configurations in a virtual living and kitchen room set. With seamless e-commerce integration, a high level of detail, and real-time interaction, the VR experience represents an engaging, valuable touch-point.

Mixed Reality 360 Live: Live Blending of Virtual Objects into Live Streamed 360° Video

An interactive mixed reality system using live streamed 360° panoramic videos is presented. A live demo for real-time image-based lighting, light detection, mixed reality rendering, and composition of 3D objects into a live streamed 360° video of a real-world environment with dynamically changing real-world lights is shown.

Gastro Ex: Real-Time Interactive Fluids and Soft Tissues on Mobile and VR

Enter Gastro Ex on smartphones and in VR. The entire environment surrounding you is interactable and “squishy,” featuring advanced soft-body physics and 3D interactive fluid dynamics. Grab anything. Cut anything. Inject anywhere. Unleash argon plasma. Enjoy emergent surgical gameplay, rendered with breathtaking real-time GI and subsurface scattering.

Democratising Mocap: Real-Time Full-Performance Motion Capture with an iPhone X, Xsens, IKINEMA, and Unreal Engine

Kite & Lightning reveals how Xsens inertial mocap technology, used in tandem with an iPhone X, can be used for full body and facial performance capture – wirelessly and without the need for a mocap volume – with the results live-streamed via IKINEMA LiveAction to Unreal Engine in real time.

Deep Learning-Based Photoreal Avatars for Online Virtual Worlds in iOS

A deep learning-based technology for generating photo-realistic 3D avatars with dynamic facial textures from a single input image is presented. Real-time performance-driven animations and renderings are demonstrated on an iPhone X and we show how these avatars can be integrated into compelling virtual worlds and used for 3D chats.

Oats Studios VFX Workflow for Real-Time Production with Photogrammetry, Alembic, and Unity

Come see how Oats Studios modified their traditional VFX pipeline to create the breakthrough real-time shorts ADAM Chapter 2 & 3 using Photogrammetry, Alembic, and the Unity real-time engine.

Virtual Production in ‘Book of the Dead’: Technicolor’s Genesis Platform, Powered by Unity

We demonstrate a Unity-powered virtual production platform that pushes the boundaries of real-time technologies to empower filmmakers with full multi-user collaboration and live manipulation of whole environments and characters. Special attention is dedicated to high-quality real-time graphics, as evidenced by Unity’s “Book of the Dead.”

The Power of Real-Time Collaborative Filmmaking

PocketStudio is designed to allow filmmakers to easily create, play, and stream 3D animation sequences in real time using real-time collaborative editing, a unified workflow, and other real-time technologies, such as augmented reality.

Wonder Painter: Turn Anything into Animation

Xiaoxiaoniu’s unique patented Wonder Painter™ technology turns anything into a vivid cartoon animation at a click of your camera. First, draw something, make something (clay, origami, building blocks, etc.), or find something (toy, picture book, etc.). Then take a photo of it and see it come alive!

The ‘Reflections’ Ray-Tracing Demo Presented in Real Time and Captured Live Using Virtual Production Techniques

Epic Games, Nvidia, and ILMxLAB would like to present 2018’s GDC demo, “Reflections,” set in the “Star Wars” universe. In addition, we will record a character performance live using virtual production/virtual reality directly into Unreal Engine Sequencer, and then play the demo with real-time ray tracing live at 24fps.

Find out more at SIGGRAPH’s website.